Global teams increasingly face language barriers in online meetings. To address this, ImmersiveQuest (a subsidiary of ImmersiveData.ai) developed a real-time, bidirectional speech translation solution for Huro AI. Each participant speaks in their native language, and the tool translates the message and speaks it aloud in the listener’s language without interrupting the natural flow of conversation.

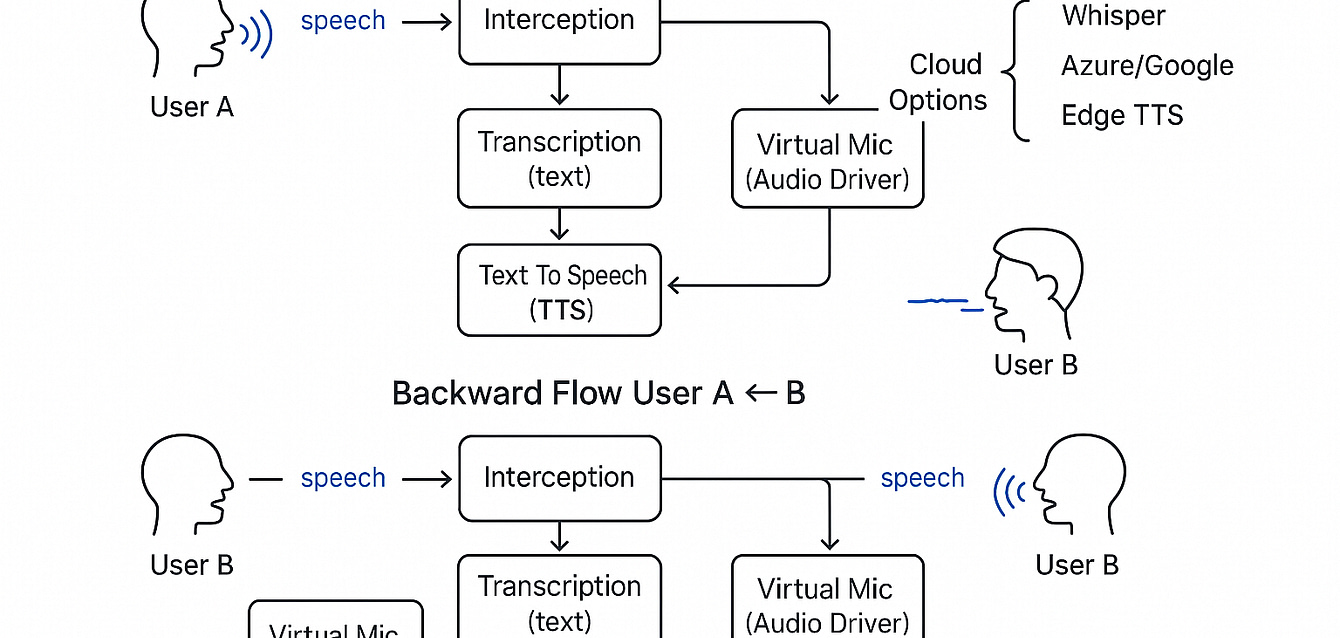

The system comprises five modules:

- Speech-to-Text (STT): Whisper or Vosk for low-latency speech recognition
- Translation Engine: MarianMT transformer models for real-time translation
- Text-to-Speech (TTS): speech output generated via Coqui-TTS or Edge APIs
- Audio Interception & Injection: integration with any video conferencing platform through VB-Cable or other virtual audio drivers
- UI Controls: a user-friendly dashboard for selecting languages and toggling features
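The first three modules form a chain that runs once per speaking direction: captured audio goes to STT, then translation, then TTS. Below is a minimal sketch of that chain, assuming each engine sits behind a small interface. The class and method names (`SpeechToText`, `TranslationPipeline`, `process_chunk`, and so on) are hypothetical and not taken from the project. The stub engines only stand in for Whisper/Vosk, MarianMT and Coqui-TTS so the example runs without models installed.

```python
from dataclasses import dataclass
from typing import Protocol


class SpeechToText(Protocol):
    def transcribe(self, pcm: bytes, lang: str) -> str: ...


class Translator(Protocol):
    def translate(self, text: str, src: str, tgt: str) -> str: ...


class TextToSpeech(Protocol):
    def synthesize(self, text: str, lang: str) -> bytes: ...


@dataclass
class TranslationPipeline:
    """One translation direction (hypothetical): source speech in, target-language speech out."""
    stt: SpeechToText
    mt: Translator
    tts: TextToSpeech
    src_lang: str
    tgt_lang: str

    def process_chunk(self, pcm: bytes) -> bytes:
        text = self.stt.transcribe(pcm, self.src_lang)
        if not text.strip():
            # Silence or unrecognized audio: skip translation and synthesis entirely.
            return b""
        translated = self.mt.translate(text, self.src_lang, self.tgt_lang)
        return self.tts.synthesize(translated, self.tgt_lang)


# Stand-in engines so the sketch runs without Whisper, MarianMT, or Coqui-TTS installed.
class EchoSTT:
    """Pretends the audio bytes are already UTF-8 text."""
    def transcribe(self, pcm: bytes, lang: str) -> str:
        return pcm.decode("utf-8")


class DictTranslator:
    """Looks translations up in a fixed table and falls back to the input text."""
    def __init__(self, table: dict) -> None:
        self.table = table

    def translate(self, text: str, src: str, tgt: str) -> str:
        return self.table.get((src, tgt, text), text)


class TaggedTTS:
    """Returns language-tagged bytes instead of synthesized audio."""
    def synthesize(self, text: str, lang: str) -> bytes:
        return f"[{lang}] {text}".encode("utf-8")


# Bidirectional operation modeled as two pipelines, one per direction.
table = {("en", "de", "hello"): "hallo", ("de", "en", "danke"): "thanks"}
en_to_de = TranslationPipeline(EchoSTT(), DictTranslator(table), TaggedTTS(), "en", "de")
de_to_en = TranslationPipeline(EchoSTT(), DictTranslator(table), TaggedTTS(), "de", "en")

print(en_to_de.process_chunk(b"hello"))  # b'[de] hallo'
print(de_to_en.process_chunk(b"danke"))  # b'[en] thanks'
```

This sketch does not model audio interception, injection or the UI. In a deployment, `process_chunk` would presumably read from the captured microphone stream and write its output to a virtual audio device such as VB-Cable. Keeping each engine behind an interface means Whisper can be swapped for Vosk, or Coqui-TTS for Edge, without touching the chain.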

The architecture is designed for plug-and-play integration and requires no modification to the host conferencing application. The system is optimized for both latency and accuracy and supports over 30 language pairs.
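Because translation is bidirectional, each language pair needs one translation model per direction. Many MarianMT checkpoints on the Hugging Face Hub follow the naming scheme `Helsinki-NLP/opus-mt-{src}-{tgt}`. The sketch below assumes the project followed that convention, which the source does not confirm. Some directions are also published only as grouped multilingual models rather than under this exact name. The helper names are hypothetical. `load_marian` and `translate` use the real `transformers` calls `MarianTokenizer.from_pretrained`, `MarianMTModel.from_pretrained`, `generate` and `batch_decode`, and they need `transformers`, `torch` and `sentencepiece` installed, plus a model download on first use.

```python
from functools import lru_cache
from itertools import combinations


def marian_model_id(src: str, tgt: str) -> str:
    """Build a model id following the Helsinki-NLP OPUS-MT naming scheme on Hugging Face."""
    return f"Helsinki-NLP/opus-mt-{src}-{tgt}"


def models_for_pairs(pairs):
    """Map each bidirectional pair to the two one-directional model ids it needs."""
    return {(a, b): (marian_model_id(a, b), marian_model_id(b, a)) for a, b in pairs}


@lru_cache(maxsize=None)
def load_marian(model_id: str):
    """Lazily load and cache a MarianMT tokenizer/model pair (imports transformers on first call)."""
    from transformers import MarianMTModel, MarianTokenizer
    tokenizer = MarianTokenizer.from_pretrained(model_id)
    model = MarianMTModel.from_pretrained(model_id)
    return tokenizer, model


def translate(texts, src: str, tgt: str):
    """Translate a batch of strings from src to tgt with the matching OPUS-MT model."""
    tokenizer, model = load_marian(marian_model_id(src, tgt))
    batch = tokenizer(texts, return_tensors="pt", padding=True)
    generated = model.generate(**batch)
    return tokenizer.batch_decode(generated, skip_special_tokens=True)


# Three languages give three bidirectional pairs, i.e. six directional models.
languages = ["en", "de", "fr"]
pairs = list(combinations(languages, 2))
models = models_for_pairs(pairs)
print(models[("en", "de")])
# ('Helsinki-NLP/opus-mt-en-de', 'Helsinki-NLP/opus-mt-de-en')
```

Caching loaded models with `lru_cache` avoids reloading weights on every utterance, which would otherwise dominate latency. Supporting more than 30 bidirectional pairs this way would mean managing more than 60 directional checkpoints.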

Results:

- End-to-end translation latency under 1.5 seconds
- 80% increase in cross-language meeting participation
- Custom language models added for domain-specific use, such as legal and healthcare
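The 1.5-second figure is an end-to-end bound, and the source does not report how it splits across STT, translation and TTS. One way to see where the time goes is to time each stage against that budget. The sketch below is a hypothetical helper built on `time.perf_counter`, with short sleeps standing in for the real engine calls. It is not the project's measurement method.

```python
import time
from contextlib import contextmanager

# End-to-end target from the reported results; the per-stage split is not given in the source.
LATENCY_BUDGET_S = 1.5


class StageTimer:
    """Accumulate wall-clock time per pipeline stage (hypothetical helper)."""

    def __init__(self) -> None:
        self.timings: dict[str, float] = {}

    @contextmanager
    def stage(self, name: str):
        start = time.perf_counter()
        try:
            yield
        finally:
            # Record elapsed time even if the stage raises.
            elapsed = time.perf_counter() - start
            self.timings[name] = self.timings.get(name, 0.0) + elapsed

    def total(self) -> float:
        return sum(self.timings.values())

    def within_budget(self, budget: float = LATENCY_BUDGET_S) -> bool:
        return self.total() <= budget


# Usage with sleeps standing in for real STT, MT, and TTS calls.
timer = StageTimer()
for name in ("stt", "mt", "tts"):
    with timer.stage(name):
        time.sleep(0.01)

print(sorted(timer.timings), round(timer.total(), 2), timer.within_budget())
```

Summing stage times matches end-to-end latency only when the stages run one after another. If STT streams partial results while earlier chunks are still being translated, measure from the end of the speaker's utterance to the first synthesized audio instead.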

This project shows how AI can make communication more inclusive at scale by removing language barriers and enabling seamless multilingual collaboration across geographies.